Robustness of Graph Neural Networks at Scale

This page links to additional material for our paper

Robustness of Graph Neural Networks at Scale

by Simon Geisler, Tobias Schmidt, Hakan Şirin, Daniel Zügner, Aleksandar Bojchevski, and Stephan Günnemann

Published at the Conference on Neural Information Processing Systems (NeurIPS) 2021

Links

[Paper | GitHub | Video (SlidesLive)]

Abstract

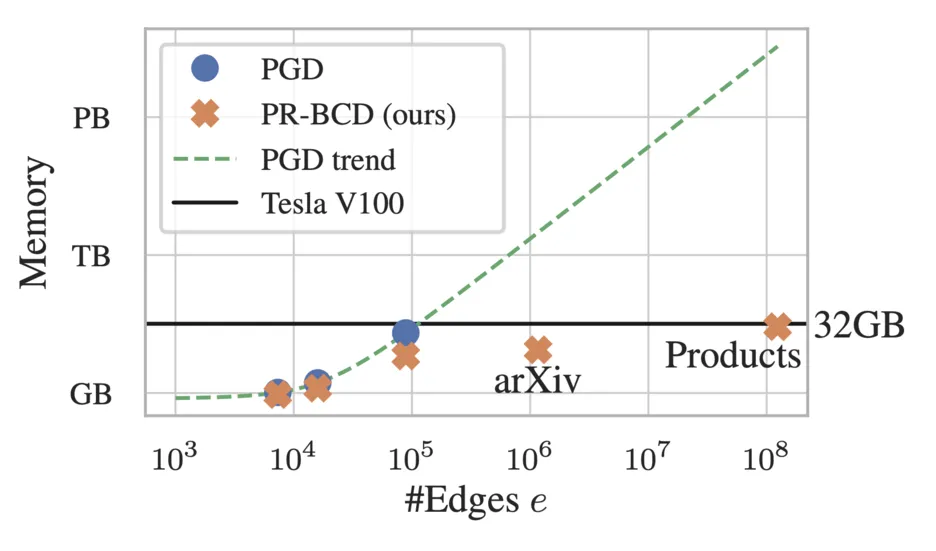

Graph Neural Networks (GNNs) are increasingly important given their popularity and the diversity of applications. Yet, existing studies of their vulnerability to adversarial attacks rely on relatively small graphs. We address this gap and study how to attack and defend GNNs at scale. We propose two sparsity-aware first-order optimization attacks that maintain an efficient representation despite optimizing over a number of parameters which is quadratic in the number of nodes. We show that common surrogate losses are not well-suited for global attacks on GNNs. Our alternatives can double the attack strength. Moreover, to improve GNNs’ reliability we design a robust aggregation function, Soft Median, resulting in an effective defense at all scales. We evaluate our attacks and defense with standard GNNs on graphs more than 100 times larger compared to previous work. We even scale one order of magnitude further by extending our techniques to a scalable GNN.
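To give a feel for what a robust aggregation such as the Soft Median does, here is a minimal NumPy sketch. It assumes the aggregation is a softmax-weighted mean of the neighbor embeddings, with weights that decay with each embedding's distance from the dimension-wise median. The function name `soft_median`, the `temperature` parameter, and the toy data are illustrative assumptions, not the released implementation. The sketch also omits edge weights and any normalization used inside a GNN layer, so please refer to the paper and the GitHub repository for the exact definition.

```python
import numpy as np


def soft_median(x, temperature=1.0):
    """Illustrative soft-median-style aggregation (assumed form, see lead-in).

    x: array of shape (n, d) holding n neighbor embeddings of dimension d.
    Returns a single aggregated embedding of shape (d,).
    """
    x = np.asarray(x, dtype=float)
    n, d = x.shape

    # Dimension-wise median serves as a robust reference point.
    median = np.median(x, axis=0)

    # Euclidean distance of every embedding to that reference point.
    dist = np.linalg.norm(x - median, axis=1)

    # Softmax over negative, temperature-scaled distances:
    # embeddings far from the median receive a weight close to zero.
    logits = -dist / (temperature * np.sqrt(d))
    logits -= logits.max()  # shift for numerical stability
    weights = np.exp(logits)
    weights /= weights.sum()

    # Weighted mean of the embeddings.
    return weights @ x


# Toy neighborhood: three clean embeddings and one adversarial outlier.
clean = np.array([[1.0, 1.0], [1.1, 0.9], [0.9, 1.1]])
outlier = np.array([[100.0, -100.0]])
points = np.vstack([clean, outlier])

mean_agg = points.mean(axis=0)                   # dragged toward the outlier
soft_agg = soft_median(points, temperature=0.1)  # stays near the clean points
```

In this toy setting a single outlier shifts the plain mean far from the clean embeddings, while the soft-median-style aggregate stays close to them. With a very large temperature the weights become nearly uniform and the result approaches the plain mean.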

Cite

Please cite our paper if you use the method in your own work:

@inproceedings{geisler2021_robustness_of_gnns_at_scale,
  title = {Robustness of Graph Neural Networks at Scale},
  author = {Geisler, Simon and Schmidt, Tobias and \c{S}irin, Hakan and Z\"ugner, Daniel and Bojchevski, Aleksandar and G\"unnemann, Stephan},
  booktitle = {Neural Information Processing Systems, {NeurIPS}},
  year = {2021},
}