Evaluating Robustness of Predictive Uncertainty Estimation: Are Dirichlet-based Models Reliable?

This page is about our paper

Evaluating Robustness of Predictive Uncertainty Estimation: Are Dirichlet-based Models Reliable?

by Anna-Kathrin Kopetzki*, Bertrand Charpentier*, Daniel Zügner, Sandhya Giri and Stephan Günnemann

Published at the International Conference on Machine Learning (ICML) 2021 (Spotlight talk)

Abstract

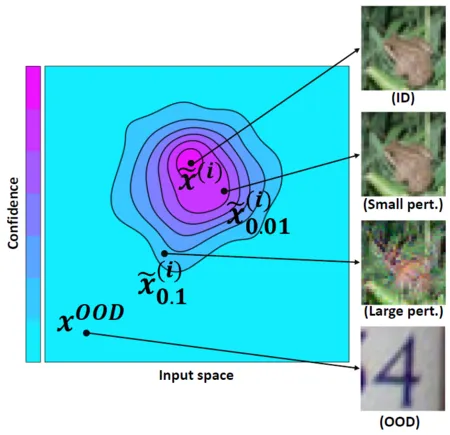

Dirichlet-based uncertainty (DBU) models are a recent and promising class of uncertainty-aware models. DBU models predict the parameters of a Dirichlet distribution to provide fast, high-quality uncertainty estimates alongside class predictions. In this work, we present the first large-scale, in-depth study of the robustness of DBU models under adversarial attacks. Our results suggest that uncertainty estimates of DBU models are not robust w.r.t. three important tasks: (1) indicating correctly and wrongly classified samples; (2) detecting adversarial examples; and (3) distinguishing between in-distribution (ID) and out-of-distribution (OOD) data. Additionally, we explore the first approaches to make DBU models more robust. While adversarial training has a minor effect, our approach based on median smoothing significantly increases the robustness of DBU models.
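To make the two technical ideas in the abstract concrete, here is a minimal sketch in plain Python. It is not the paper's implementation. `dirichlet_summary` shows how a class prediction and simple uncertainty scores can be read off predicted Dirichlet parameters. `median_smoothed` illustrates the generic idea of median smoothing: evaluate a scalar score on Gaussian-perturbed copies of the input and report the median. All names here (`dirichlet_summary`, `median_smoothed`, `toy_dbu_model`, `sigma`, `n_samples`) are hypothetical and chosen for illustration, and the toy model is an assumed stand-in for a trained network.

```python
import math
import random
import statistics


def dirichlet_summary(alpha):
    """Summarize a Dirichlet given its concentration parameters (one per class)."""
    alpha0 = sum(alpha)                      # total concentration, often read as "evidence"
    probs = [a / alpha0 for a in alpha]      # expected class probabilities under the Dirichlet
    pred = max(range(len(probs)), key=probs.__getitem__)
    return {"pred": pred, "probs": probs, "alpha0": alpha0, "max_prob": probs[pred]}


def median_smoothed(score_fn, x, sigma=0.1, n_samples=101, seed=0):
    """Median of score_fn over Gaussian perturbations of x (generic sketch)."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        scores.append(score_fn(noisy))
    return statistics.median(scores)


def toy_dbu_model(x):
    """Hypothetical stand-in for a DBU network: maps a 2-D input to 3 Dirichlet parameters."""
    weights = [[2.0, 0.0], [0.0, 2.0], [-1.0, -1.0]]
    # alpha_c = 1 + exp(w_c . x) keeps every parameter above 1 (assumption of this toy model)
    return [1.0 + math.exp(sum(w * xi for w, xi in zip(row, x))) for row in weights]


# A peaked Dirichlet (confident) versus a flat one (uncertain).
confident = dirichlet_summary([20.0, 1.0, 1.0])
flat = dirichlet_summary([1.0, 1.0, 1.0])

# Smooth the total concentration alpha0 of the toy model around one input.
smoothed_alpha0 = median_smoothed(lambda z: sum(toy_dbu_model(z)), [1.0, 0.0])
```

The median is used here rather than the mean because it is less sensitive to a few perturbed inputs that produce extreme scores. The noise level `sigma` and the number of samples are free choices in this sketch.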